SleekRank for benchmark pages

Maintain metric rows with definition, p25, p50, p75, segment breakdowns and methodology in one source. SleekRank renders one URL per metric — email-open-rate, lcp-score, saas-churn-rate — through one base template with quarterly refresh as a sheet workflow.


Benchmarks are evergreen reference traffic

Benchmark pages — "average email open rate", "good LCP score", "typical SaaS churn" — earn evergreen links because teams cite them in slide decks and reports. The format barely changes across metrics: definition, percentiles, segment breakdowns, methodology, sources. The challenge is making refresh cycles cheap enough that the numbers stay current.

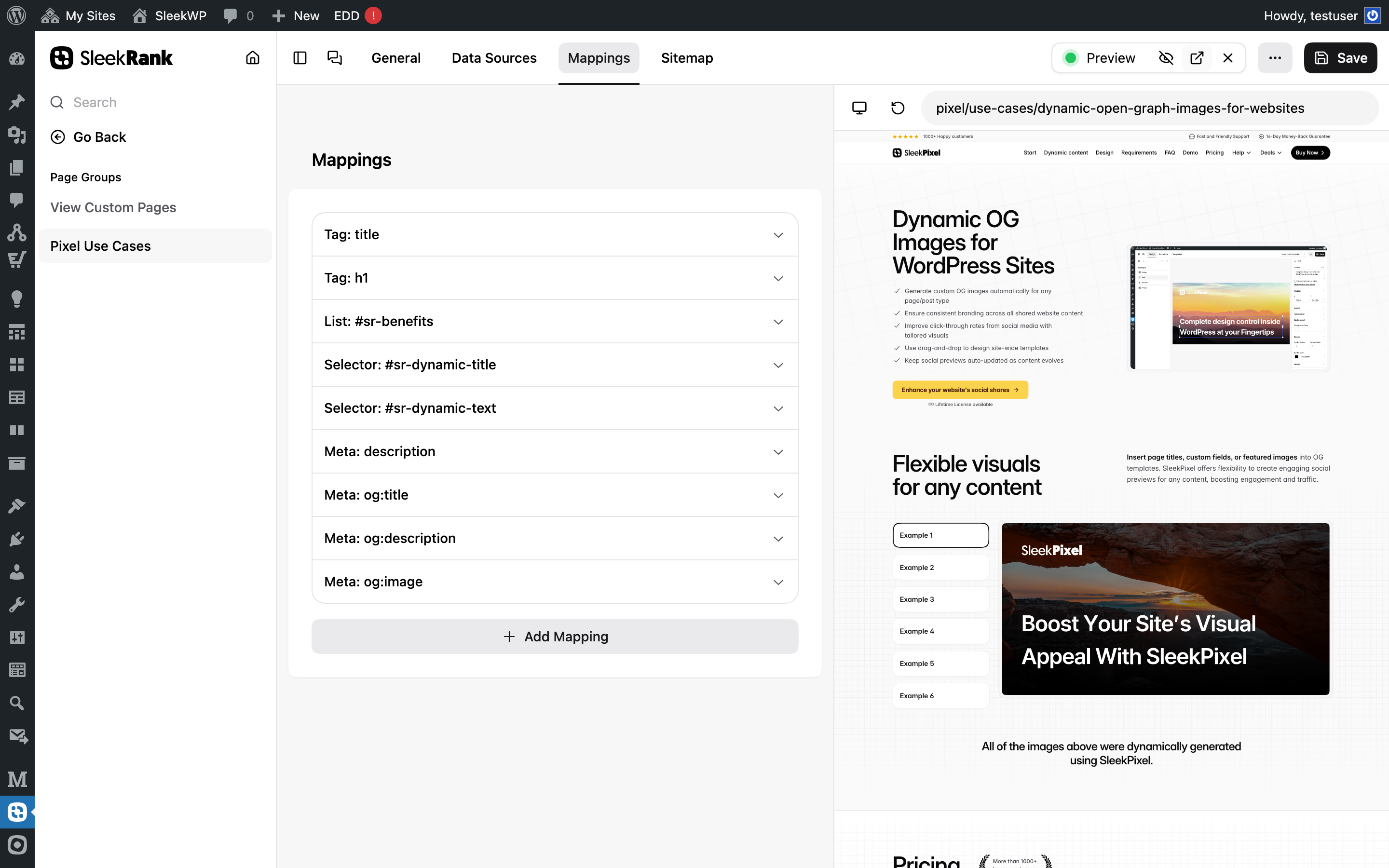

SleekRank treats each benchmark as a row. Definition, p25, p50, p75, segment breakdowns and methodology come from columns. The base /benchmarks/template/ renders the page through a consistent layout: hero with the metric and headline percentiles, a definition block, a percentile chart, a segment breakdown table and a methodology section. Refresh quarterly when new survey data lands; the URL pattern stays clean — /benchmarks/{slug}/.

Percentiles render with consistent formatting whether they're percentages (email open rate at 27% p50), seconds (LCP at 2.5s p50) or absolute numbers (CAC payback at 14 months p50). Tag mappings inject the values into structured cards on the base page. Segment breakdown rows render through a list mapping pointed at a table or card grid. Dataset JSON-LD on the base page reads the same data so structured-data freshness travels with the visual freshness automatically.
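One way to picture the consistent formatting is a small helper keyed on a unit column. This is an illustrative Python sketch, not SleekRank's API: the `unit` values and the `format_percentile` name are assumptions made for the example.

```python
def format_percentile(value: float, unit: str) -> str:
    """Format one percentile value for display.

    `unit` is a hypothetical column on the metric row; the three
    units below mirror the examples in the text.
    """
    number = f"{value:g}"  # drops trailing zeros: 27.0 -> "27", 2.5 -> "2.5"
    if unit == "percent":
        return f"{number}%"
    if unit == "seconds":
        return f"{number}s"
    if unit == "months":
        return f"{number} months"
    return number  # unknown unit: fall back to the bare number


print(format_percentile(27, "percent"))
print(format_percentile(2.5, "seconds"))
print(format_percentile(14, "months"))
```

Keeping the unit in its own column means the template never has to guess whether a stored "27" is a percentage or a count.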

Workflow

From metric rows to citation-grade benchmarks

Build the metric source

Design /benchmarks/template/

Map percentiles and segments

Quarterly refresh ritual

Data in, pages out

Metric rows, benchmark pages out

One row per metric with slug, metric, definition, p25, p50, p75, segment breakdown and last-reviewed date.

| slug | metric | p25 | p50 | p75 |
|---|---|---|---|---|
| email-open-rate | Email open rate | 18% | 27% | 38% |
| lcp-score | LCP score | 1.8s | 2.5s | 3.4s |
| saas-churn-rate | SaaS monthly churn | 1.2% | 3.1% | 5.8% |
| ecommerce-conversion-rate | Ecommerce conversion rate | 1.1% | 2.4% | 4.0% |
| cac-payback | CAC payback (months) | 8 | 14 | 22 |

/benchmarks/{slug}/

- /benchmarks/email-open-rate/

- /benchmarks/lcp-score/

- /benchmarks/saas-churn-rate/

- /benchmarks/ecommerce-conversion-rate/

- /benchmarks/cac-payback/
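The slug-to-URL relationship above can be sketched in a few lines of Python. This only illustrates the pattern and is not how SleekRank builds routes internally; the `benchmark_url` helper and the slug rule (lowercase letters, digits and single hyphens) are assumptions.

```python
import re

# Assumed slug rule: lowercase alphanumerics separated by single hyphens.
SLUG_PATTERN = re.compile(r"[a-z0-9]+(?:-[a-z0-9]+)*")


def benchmark_url(slug: str) -> str:
    """Return the /benchmarks/{slug}/ path for a metric row's slug."""
    if not SLUG_PATTERN.fullmatch(slug):
        raise ValueError(f"invalid slug: {slug!r}")
    return f"/benchmarks/{slug}/"


slugs = [
    "email-open-rate",
    "lcp-score",
    "saas-churn-rate",
    "ecommerce-conversion-rate",
    "cac-payback",
]
urls = [benchmark_url(slug) for slug in slugs]
print("\n".join(urls))
```

Rejecting malformed slugs in the source data is cheaper than finding a broken URL after publication.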

Comparison

Hand-built benchmarks vs SleekRank

Hand-built benchmark posts

- Refreshing percentiles across many posts every quarter is tedious

- Methodology drifts between posts as authors update some, not all

- Segment breakdowns baked into copy are hard to revise

- FAQ schema added inconsistently across benchmarks

- Layout drifts between metrics as authors freelance

- No single source of truth for which benchmarks exist

SleekRank

- One base page renders every benchmark

- Percentiles live in dedicated columns

- Segment breakdowns come from list mappings

- Methodology lives once on the base page

- Quarterly refresh = sheet edit + one cache flush

- Pair with SleekPixel for per-metric OG images

Features

What SleekRank gives you for benchmark pages

Percentiles as data

p25, p50 and p75 live in dedicated columns. The base page reads them via tag mappings, so quarterly updates across email-open-rate, lcp-score and saas-churn-rate take seconds per metric.

Segment breakdowns

Segment-level rows or nested objects map to a list, so each segment renders as a row in a structured comparison table — by industry, company size or region — with consistent percentile columns.

Quarterly refresh

Update percentiles in the sheet when new survey data lands, then flush the cache. Every benchmark page reflects the new figures and the new last-reviewed date without touching individual posts.
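A quarterly cycle is easier to keep if something lists the rows that are overdue. The Python sketch below assumes each row carries a `last_reviewed` column in ISO format (YYYY-MM-DD) and treats 92 days as one quarter. Both are assumptions for illustration, and the dates are placeholders.

```python
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=92)  # assumed length of one quarter


def overdue_slugs(rows: list[dict], today: date) -> list[str]:
    """Return slugs whose last review is older than the review interval."""
    return [
        row["slug"]
        for row in rows
        if today - date.fromisoformat(row["last_reviewed"]) > REVIEW_INTERVAL
    ]


# Placeholder rows, not real review dates.
rows = [
    {"slug": "email-open-rate", "last_reviewed": "2025-01-10"},
    {"slug": "lcp-score", "last_reviewed": "2025-05-20"},
]
print(overdue_slugs(rows, today=date(2025, 7, 1)))
```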

Use cases

Where benchmark libraries live on SleekRank

Marketing benchmarks

Per-channel benchmarks — email open rate, paid CPC, SEO CTR, social engagement — kept fresh with quarterly review cycles and segment breakdowns by industry vertical and company size.

SaaS benchmarks

Per-metric SaaS benchmarks — churn, NRR, CAC payback, ARR-per-employee — sourced from surveys or anonymized customer data. Segment by ARR band so early-stage and scale-up readers find their relevant slice.

Performance benchmarks

Per-metric web performance benchmarks — LCP, INP, CLS — published as canonical reference pages for engineers. Segment by industry and device type. Cross-link to Core Web Vitals documentation.

The bigger picture

Why benchmarks live or die on methodology

Benchmarks are reference content with unusually high stakes. Teams cite them in pitch decks, board reports and investor updates. A wrong p50 isn't an embarrassment — it's a real-world cost when a CFO uses your benchmark to set a target.

That makes methodology disclosure the single most important element on the page, and consistency of methodology across metrics the single most important quality of the library.

Hand-built benchmark posts struggle here. Different posts written by different authors disclose methodology differently. Sample sizes drift. Some posts cite their data source, others don't. Segment breakdowns are present on some metrics, missing from others. Over time, the benchmark library becomes inconsistent enough that sophisticated readers stop trusting it and start citing competitors.

Treating benchmarks as data forces methodology consistency at the structural level. Methodology lives on the base page, identical for every metric. Sample size lives in a column, surfaced systematically. Last-reviewed dates surface on every page, so freshness is a public commitment. Segment breakdowns follow a consistent schema (segment name, p25, p50, p75), so readers know how to read every benchmark in the library.

The /benchmarks/ subdirectory becomes a citation-grade reference, which is the only kind worth publishing.

Questions

Common questions about SleekRank for benchmark pages

From your data — annual surveys you've run, anonymized customer data from your platform, public APIs (CrUX for Core Web Vitals, for example). SleekRank renders what you provide; sourcing is your team's job. Disclose the source on the methodology block of the base page once and every benchmark inherits it. The disclosure is what separates a citable benchmark from an unreliable one.

Yes. Store segment-level data as a nested array or related table — each segment as an object with name, p25, p50 and p75. Render via a list mapping pointed at a table body or a card grid. Common segments are industry, company size (ARR band, employee count) and region. Surface enough segments to be useful but not so many the page overwhelms the headline figure.
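As a rough picture of the nested-array shape and how it could become table rows, here is a Python sketch. It does not use SleekRank's list-mapping syntax. The `render_segment_rows` helper is hypothetical and the segment figures are placeholders, not real benchmark data.

```python
from html import escape


def render_segment_rows(segments: list[dict]) -> str:
    """Render each segment object as one <tr> with name, p25, p50, p75."""
    rows = []
    for segment in segments:
        cells = [segment["name"], segment["p25"], segment["p50"], segment["p75"]]
        tds = "".join(f"<td>{escape(str(cell))}</td>" for cell in cells)
        rows.append(f"<tr>{tds}</tr>")
    return "\n".join(rows)


# Placeholder values for illustration only.
metric_row = {
    "slug": "email-open-rate",
    "segments": [
        {"name": "Retail & CPG", "p25": "15%", "p50": "24%", "p75": "33%"},
        {"name": "B2B software", "p25": "19%", "p50": "28%", "p75": "40%"},
    ],
}
print(render_segment_rows(metric_row["segments"]))
```

Every segment object carries the same four keys, which is what keeps the percentile columns consistent across metrics.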

Add Dataset JSON-LD to the base page with name, description, distribution, includedInDataCatalog and dateModified. Inject the metric, p50, sample size and last-reviewed via selector or meta mappings. Dataset schema is one of the few schema types Google still actively features in dataset search results, so the structured data has real distribution value beyond rich snippets.
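For orientation, a Dataset JSON-LD object built from one metric row might look like the output of this Python sketch. The row keys (`metric`, `definition`, `sample_size`, `last_reviewed`) and all values are assumptions. `distribution` and `includedInDataCatalog` are left out to keep the example short, and SleekRank's own mapping configuration is not shown.

```python
import json


def dataset_jsonld(row: dict) -> str:
    """Build a schema.org Dataset object from one metric row."""
    data = {
        "@context": "https://schema.org",
        "@type": "Dataset",
        "name": f"{row['metric']} benchmark",
        "description": row["definition"],
        "dateModified": row["last_reviewed"],
        "variableMeasured": [
            {"@type": "PropertyValue", "name": "p50", "value": row["p50"]},
            {"@type": "PropertyValue", "name": "sample size", "value": row["sample_size"]},
        ],
    }
    return json.dumps(data, indent=2)


# Placeholder row; definition, sample size and date are made up for the example.
row = {
    "metric": "Email open rate",
    "definition": "Placeholder definition of the metric.",
    "p50": "27%",
    "sample_size": 1200,
    "last_reviewed": "2025-01-15",
}
print(dataset_jsonld(row))
```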

Each source has a configurable cacheDuration. Update the sheet, flush the cache (for example with `wp db query "DELETE FROM wp_319_sleek_rank_items"`, where the `wp_319_` table prefix depends on your site), and every benchmark page reflects the new percentiles and the new last-reviewed date. The freshness signal is both visible (the badge) and structured (dateModified in the Dataset JSON-LD), so reader trust and search engine signals stay aligned.

Two patterns work depending on scale. For two or three industries, use the segments array within each metric row and render as a breakdown table. For more — fifteen industries across thirty metrics — run separate page groups per industry with their own data sources, e.g. /benchmarks/saas/{slug}/ and /benchmarks/ecommerce/{slug}/. The latter scales to four hundred and fifty pages without bloating individual rows.
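The page count for the second pattern is the product of industries and metrics. A quick Python check, using placeholder industry and metric names:

```python
from itertools import product

# Placeholder names; only the counts (15 and 30) come from the text.
industries = [f"industry-{n}" for n in range(1, 16)]
metric_slugs = [f"metric-{n}" for n in range(1, 31)]

urls = [
    f"/benchmarks/{industry}/{slug}/"
    for industry, slug in product(industries, metric_slugs)
]
print(len(urls))  # 15 * 30 = 450
```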

No. SleekRank reads from your data source. Collecting, anonymizing and verifying benchmark data is your responsibility. The structured approach makes it easier to manage that responsibility — sample sizes are explicit columns, last-reviewed is a real date, methodology lives once on the base page — but the underlying research and data integrity work is yours. Pair with a validator that flags suspicious rows before publication.
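A validator of this kind can be very small. The Python sketch below is one possible shape, not a SleekRank feature: the column names, the minimum sample size of 30 and the specific checks are all assumptions to adapt to your own data.

```python
MIN_SAMPLE_SIZE = 30  # assumed threshold; pick one that suits your methodology


def validate_row(row: dict) -> list[str]:
    """Return a list of problems found in one metric row (empty if none)."""
    problems = []
    percentiles = [row.get(key) for key in ("p25", "p50", "p75")]
    if any(p is None for p in percentiles):
        problems.append("missing percentile")
    elif not (percentiles[0] <= percentiles[1] <= percentiles[2]):
        # By definition p25 <= p50 <= p75, whatever the metric's direction.
        problems.append("percentiles out of order")
    if (row.get("sample_size") or 0) < MIN_SAMPLE_SIZE:
        problems.append("sample size too small")
    if not row.get("last_reviewed"):
        problems.append("missing last-reviewed date")
    return problems


# Placeholder row with two deliberate problems.
suspicious = {
    "slug": "example-metric",
    "p25": 3.4, "p50": 2.5, "p75": 1.8,
    "sample_size": 12,
    "last_reviewed": "2025-01-15",
}
print(validate_row(suspicious))
```

Running a check like this before each cache flush stops a mistyped cell from reaching every page that reads the row.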

Add the relevant context as columns. "Email open rate by industry" is one segment dimension. "By list size" might be another. Render both breakdowns on the same page if they're both useful, or split into two metric rows if the context substantively changes the percentiles. Don't pretend one benchmark applies universally when segmentation reveals it doesn't — readers will catch it.

Yes. Add a related_product or use_case column and render it as a CTA on the page — "how to improve email open rate" linking to a product page or a how-to. SleekRank treats the column like any other field. Done well, this turns benchmark pages into top-of-funnel acquisition channels for product features that solve the gap between p50 and p75 performance.

Pricing

More than 1,000

happy customers

Explore our flexible licensing options tailored to your needs. Upgrade your license anytime to access more features, or opt for a lifetime license for ongoing value, including lifetime updates and lifetime support. Our hassle-free upgrade process ensures that our platform can grow with you, starting from whichever plan you choose.

Starter

EUR

per year

further 30% launch-discount applied during checkout for existing customers.

- websites

- 1 year of updates

- 1 year of support

Pro

EUR

per year

further 30% launch-discount applied during checkout for existing customers.

- websites

- 1 year of updates

- 1 year of support

Lifetime ♾️

Launch Offer

€299

EUR

once

further 30% launch-discount applied during checkout for existing customers.

- websites

- 1 year of updates

- 1 year of support

...or get the Bundle Deal

and save €250 🎁

The Bundle (unlimited sites)

Pay once, own it forever

Elevate your WordPress site with our exclusive plugin bundle that includes all of our premium plugins in one package. Enjoy lifetime updates and lifetime support. Save significantly compared to buying plugins individually.

What’s included

- SleekAI

- SleekByte

- SleekMotion

- SleekPixel

- SleekRank

- SleekView

€749
