SleekRank for LLM API comparisons

Keep LLM APIs and pairs as rows, and SleekRank generates /llm/{model}/ and /llm/{a}-vs-{b}/ pages from your existing WordPress template, with input price, output price, context window, and benchmark scores pulled from one source.

€50 off for the first 100 lifetime licenses!

LLM pricing and limits move on the vendor's calendar

LLM APIs change pricing, context windows, and rate limits constantly. A GPT, Claude, Gemini, or Mistral comparison written last quarter is likely wrong on per-million-token cost, output cap, or tool-use behavior. Developer-facing publications and affiliate review sites end up with dozens of pages whose pricing tables disagree with what the provider's pricing page shows today.

SleekRank reads one source: a sheet of models with name, vendor, input_price_per_million, output_price_per_million, context_window_tokens, max_output_tokens, modalities, function_calling flag, and benchmark scores. It drives per-model pages at /llm/{model}/ and pair pages at /llm/{a}-vs-{b}/ from the same row data. The base page is a normal WordPress page, and row values fill in the pricing table, context block, and benchmark grid.

Input and output prices are the fields readers compare hardest, and they drift fastest. When OpenAI cuts GPT prices or Anthropic tweaks Claude tiers, every page quoting the old number goes stale within hours. With prices stored in input_price and output_price columns, the template renders both via tag mapping and computes blended cost at a quoted ratio, and one sheet edit propagates the change across every per-model and pair page in the catalog.
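To make "blended cost at a quoted ratio" concrete, here is a minimal Python sketch of the arithmetic. The function name and the 3:1 input-to-output default are assumptions for illustration, not SleekRank settings; the prices are the gpt-4o values from the sample matrix on this page.

```python
def blended_price_per_million(input_price: float, output_price: float,
                              input_share: float = 0.75) -> float:
    """Weighted average price per million tokens.

    input_share is the assumed fraction of all tokens that are input
    tokens; 0.75 corresponds to a 3:1 input-to-output ratio.
    """
    if not 0.0 <= input_share <= 1.0:
        raise ValueError("input_share must be between 0 and 1")
    return input_share * input_price + (1.0 - input_share) * output_price


# gpt-4o sample row: 2.50 input, 10.00 output per million tokens.
print(blended_price_per_million(2.50, 10.00))  # 4.375
```

Whatever ratio a page quotes should be stated next to the number, since the blended figure changes with the assumed mix.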

Workflow

From model sheet to per-model and head-to-head pages

Build the model sheet

Wire the model template

Add a pairs page group

Refresh on pricing or release news

Data in, pages out

Model matrix in, API comparison pages out

| slug | model | input_price_per_m | output_price_per_m | context_tokens |
|---|---|---|---|---|
| gpt-4o | GPT-4o | 2.50 | 10.00 | 128000 |
| claude-3-5-sonnet | Claude 3.5 Sonnet | 3.00 | 15.00 | 200000 |
| gemini-1-5-pro | Gemini 1.5 Pro | 1.25 | 5.00 | 2000000 |
| mistral-large | Mistral Large 2 | 2.00 | 6.00 | 128000 |
| llama-3-1-405b | Llama 3.1 405B (Bedrock) | 5.32 | 16.00 | 128000 |

/llm/{slug}/

- /llm/gpt-4o/

- /llm/claude-3-5-sonnet/

- /llm/gemini-1-5-pro/

- /llm/gpt-4o-vs-claude-3-5-sonnet/

- /llm/claude-3-5-sonnet-vs-gemini-1-5-pro/
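The mapping from rows to the URLs above can be sketched in a few lines of Python. This illustrates the pattern only, not SleekRank's internal code; the helper names are hypothetical, and the `pairs` list stands in for a pairs sheet.

```python
def model_url(slug: str) -> str:
    """URL for a per-model page."""
    return f"/llm/{slug}/"


def pair_url(slug_a: str, slug_b: str) -> str:
    """URL for a head-to-head page; order follows the pair row."""
    return f"/llm/{slug_a}-vs-{slug_b}/"


# Slugs taken from the sample matrix; the pair list stands in for a pairs sheet.
model_slugs = ["gpt-4o", "claude-3-5-sonnet", "gemini-1-5-pro"]
pairs = [
    ("gpt-4o", "claude-3-5-sonnet"),
    ("claude-3-5-sonnet", "gemini-1-5-pro"),
]

# Skip any pair that references a slug missing from the models sheet.
known = set(model_slugs)
urls = [model_url(s) for s in model_slugs]
urls += [pair_url(a, b) for a, b in pairs if a in known and b in known]

for url in urls:
    print(url)
```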

Comparison

Hand-edited LLM reviews versus one synced matrix

Manual model reviews

- Per-token prices drift faster than editors can patch pages

- Context window claims disagree across pages on the same site

- Benchmark scores go stale after every model release

- Adding a new model means writing a stack of new pages

- Changes to function-calling support rarely reach existing reviews

- Pair page verdicts fall out of step with per-model facts

SleekRank

- One row drives the per-model page and every pair

- Pricing columns flow through to all cost comparisons

- Context window and output cap stay consistent everywhere

- Benchmark grid renders from a structured column set

- Cache flush updates every page after a sheet edit

- Sitemap reflects current models as the matrix evolves

Features

What SleekRank gives you for LLM API comparisons

Pricing in one place

Input and output per-million-token prices inject into pricing tables across the catalog, so a vendor cut is one row edit instead of a sweep across per-model and pair pages.

Pair page support

A pairs page group joins two model rows into an /llm/{a}-vs-{b}/ template, so head-to-heads stay in step with per-model pages, with side-by-side specs and a pair-specific verdict.

Benchmark grid

MMLU, HumanEval, GPQA, and other benchmark columns drive a per-model scorecard and a comparison grid on pair pages, so scores stay aligned across the review set.

Use cases

Who builds LLM API comparisons with SleekRank

Developer publications

Sites that cover the AI stack run a master matrix of model APIs, with pricing and capability columns driving every per-model page and head-to-head comparison.

AI consultancies

Consulting firms publish vendor comparison resources for clients picking models, with one sheet driving public reference pages used during architecture reviews.

Educators and researchers

Course authors and analysts maintain a public matrix of model specs for curriculum and reports, with rows updated each release cycle and pages following automatically.

The bigger picture

Why LLM API comparisons rot without a data layer

Developers picking an LLM API are sizing a real cost line: token volume times per-million-token price across a fleet of features. They want hard numbers, not adjective-heavy reviews, and the numbers move on the vendor's calendar, not the editor's. Hand-edited review pages drift on exactly the axes that matter most: input price, output price, context window, function-calling support, modality coverage, and benchmark scores.

A GPT-4 page written before a price cut quotes a number the buyer can immediately falsify on the vendor's pricing page, and trust in the rest of the comparison evaporates. SleekRank pins these facts to a single row, so when a vendor changes pricing, every per-model and pair page updates after the next cache cycle. For a developer-focused publication, the result is a comparison catalog that stays credible across release seasons, instead of a snapshot that goes stale the week a major vendor ships an update.

Questions

Common questions about SleekRank for LLM API comparisons

Not directly. SleekRank renders from your data source. If the sheet is updated by a scraper, a connected script, or your editorial team on a regular cadence, the new numbers flow through on the next cache cycle. The data acquisition layer lives upstream of SleekRank, which is responsible for rendering whatever is current in the source consistently across solo and pair pages.

Both page groups read from the models sheet. The pairs group joins two rows at render time using a slug pair from a pairs sheet. A change to a model row updates every page that references the model, including per-model, pair, and any category roll-ups, after the cache window expires.
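As a rough illustration of that render-time join (a sketch under assumed data shapes, not SleekRank's implementation), the Python below looks up both model rows for a pair and builds side-by-side spec rows. The dictionary layout and `build_pair_context` are hypothetical; the values come from the sample matrix, apart from one price edit marked as hypothetical.

```python
SPEC_FIELDS = ["input_price_per_m", "output_price_per_m", "context_tokens"]

models = {
    "gpt-4o": {"model": "GPT-4o", "input_price_per_m": 2.50,
               "output_price_per_m": 10.00, "context_tokens": 128000},
    "claude-3-5-sonnet": {"model": "Claude 3.5 Sonnet", "input_price_per_m": 3.00,
                          "output_price_per_m": 15.00, "context_tokens": 200000},
}
pairs = [{"a": "gpt-4o", "b": "claude-3-5-sonnet"}]


def build_pair_context(pair: dict, models: dict) -> dict:
    """Join the two model rows named by a pair row.

    A KeyError here means the pairs sheet references a slug that is
    not in the models sheet.
    """
    left, right = models[pair["a"]], models[pair["b"]]
    return {
        "title": f"{left['model']} vs {right['model']}",
        "rows": [(field, left[field], right[field]) for field in SPEC_FIELDS],
    }


before = build_pair_context(pairs[0], models)
models["gpt-4o"]["input_price_per_m"] = 2.00  # hypothetical price edit
after = build_pair_context(pairs[0], models)
print(before["rows"][0], "->", after["rows"][0])
```

Because the pair page holds only slugs, the edited price shows up in the pair context without touching the pairs data.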

Define another page group with a different URL pattern, source from the same sheet, and filter on the use-case columns. A /llm/best-for-coding/ landing page becomes its own SEO target, with intro copy on the base page and the matching subset rendered from the source. The same approach works for cheapest, biggest context, multimodal, or open-weight cuts.
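The subset logic behind such a landing page amounts to a filter plus an optional sort. The Python sketch below assumes rows are plain dictionaries and uses a hypothetical `select_rows` helper; the values come from the sample matrix. A use-case flag column would plug in as the `keep` predicate in the same way.

```python
rows = [
    {"slug": "gpt-4o", "input_price_per_m": 2.50, "context_tokens": 128000},
    {"slug": "claude-3-5-sonnet", "input_price_per_m": 3.00, "context_tokens": 200000},
    {"slug": "gemini-1-5-pro", "input_price_per_m": 1.25, "context_tokens": 2000000},
    {"slug": "mistral-large", "input_price_per_m": 2.00, "context_tokens": 128000},
]


def select_rows(rows, keep, sort_key=None, reverse=False, limit=None):
    """Return the rows that pass `keep`, optionally sorted and truncated."""
    subset = [row for row in rows if keep(row)]
    if sort_key is not None:
        subset = sorted(subset, key=sort_key, reverse=reverse)
    return subset[:limit]  # limit=None keeps every matching row


# "Biggest context" cut: at least 200k tokens, largest first.
biggest_context = select_rows(
    rows,
    keep=lambda r: r["context_tokens"] >= 200000,
    sort_key=lambda r: r["context_tokens"],
    reverse=True,
)

# "Cheapest" cut: three lowest input prices.
cheapest = select_rows(
    rows,
    keep=lambda r: True,
    sort_key=lambda r: r["input_price_per_m"],
    limit=3,
)

print([r["slug"] for r in biggest_context])
print([r["slug"] for r in cheapest])
```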

Yes. Add an open_weight flag column and a side dataset, keyed by model slug, that lists hosts and their per-token prices. The template can render a hosts table beneath the headline pricing block, joined at render time. Per-host price differences for Llama or Mistral families render cleanly without splitting the model into multiple rows.
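A minimal Python sketch of that side-dataset join follows, assuming the side dataset is a mapping from model slug to a list of host entries. The `hosts_table` helper and the `example-host` entry with its prices are placeholders for illustration; only the Bedrock figures come from the sample matrix.

```python
hosts_by_slug = {
    "llama-3-1-405b": [
        # From the sample matrix on this page.
        {"host": "Bedrock", "input_price_per_m": 5.32, "output_price_per_m": 16.00},
        # Placeholder host and prices, for illustration only.
        {"host": "example-host", "input_price_per_m": 4.00, "output_price_per_m": 4.00},
    ],
}


def hosts_table(model_row: dict, hosts_by_slug: dict) -> list:
    """Host entries for an open-weight model, cheapest input price first.

    Returns an empty list for closed models or models with no host data,
    so the template can skip the block.
    """
    if not model_row.get("open_weight"):
        return []
    entries = hosts_by_slug.get(model_row["slug"], [])
    return sorted(entries, key=lambda h: h["input_price_per_m"])


llama = {"slug": "llama-3-1-405b", "model": "Llama 3.1 405B", "open_weight": True}
for entry in hosts_table(llama, hosts_by_slug):
    print(entry["host"], entry["input_price_per_m"], entry["output_price_per_m"])
```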

Yes. The pairs sheet has its own verdict column. The per-model verdicts handle solo pages, and the pair verdict drives head-to-heads. If a pair row's verdict is empty, the template can fall back to a templated summary built from the two model rows' verdict snippets. Either way, you control the wording per pair when the comparison deserves it.
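The fallback can be pictured as a small function: use the pair's own verdict when present, otherwise stitch one together from the two model rows. This Python sketch uses a hypothetical `pair_verdict` helper and placeholder verdict text; the exact fallback wording is an assumption.

```python
def pair_verdict(pair_row: dict, left: dict, right: dict) -> str:
    """Prefer the pair-specific verdict; fall back to a templated summary."""
    verdict = (pair_row.get("verdict") or "").strip()
    if verdict:
        return verdict
    return (f"{left['model']}: {left['verdict']} "
            f"{right['model']}: {right['verdict']}")


# Placeholder rows; the verdict strings are illustrative only.
left = {"model": "Model A", "verdict": "Placeholder verdict for A."}
right = {"model": "Model B", "verdict": "Placeholder verdict for B."}

print(pair_verdict({"verdict": "Custom head-to-head verdict."}, left, right))
print(pair_verdict({"verdict": ""}, left, right))
```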

Add a deprecated flag and a successor_slug column. The template renders a deprecation banner via selector mapping when the flag is true, and the successor field links to the recommended replacement. Or drop the row entirely so the URL stops generating, and add a 301 redirect to the successor to preserve link equity for backlinks the deprecated model accumulated.
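Both options reduce to simple row logic, sketched here in Python. The helper names, the banner wording, and the `old-model`/`new-model` slugs are hypothetical; the actual 301 rules would live in whatever redirect tool the site already uses.

```python
from typing import Optional


def deprecation_banner(row: dict, models: dict) -> Optional[str]:
    """Banner text for a deprecated model, or None if it is still current."""
    if not row.get("deprecated"):
        return None
    successor = models.get(row.get("successor_slug") or "")
    if successor is None:
        return f"{row['model']} is deprecated."
    return (f"{row['model']} is deprecated. "
            f"See {successor['model']}: /llm/{successor['slug']}/")


def redirect_map(dropped_rows: list) -> dict:
    """Old URL -> successor URL, for rows removed from the sheet."""
    return {
        f"/llm/{row['slug']}/": f"/llm/{row['successor_slug']}/"
        for row in dropped_rows
        if row.get("successor_slug")
    }


# Hypothetical rows for illustration.
models = {
    "old-model": {"slug": "old-model", "model": "Old Model",
                  "deprecated": True, "successor_slug": "new-model"},
    "new-model": {"slug": "new-model", "model": "New Model", "deprecated": False},
}

print(deprecation_banner(models["old-model"], models))
print(redirect_map([models["old-model"]]))
```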

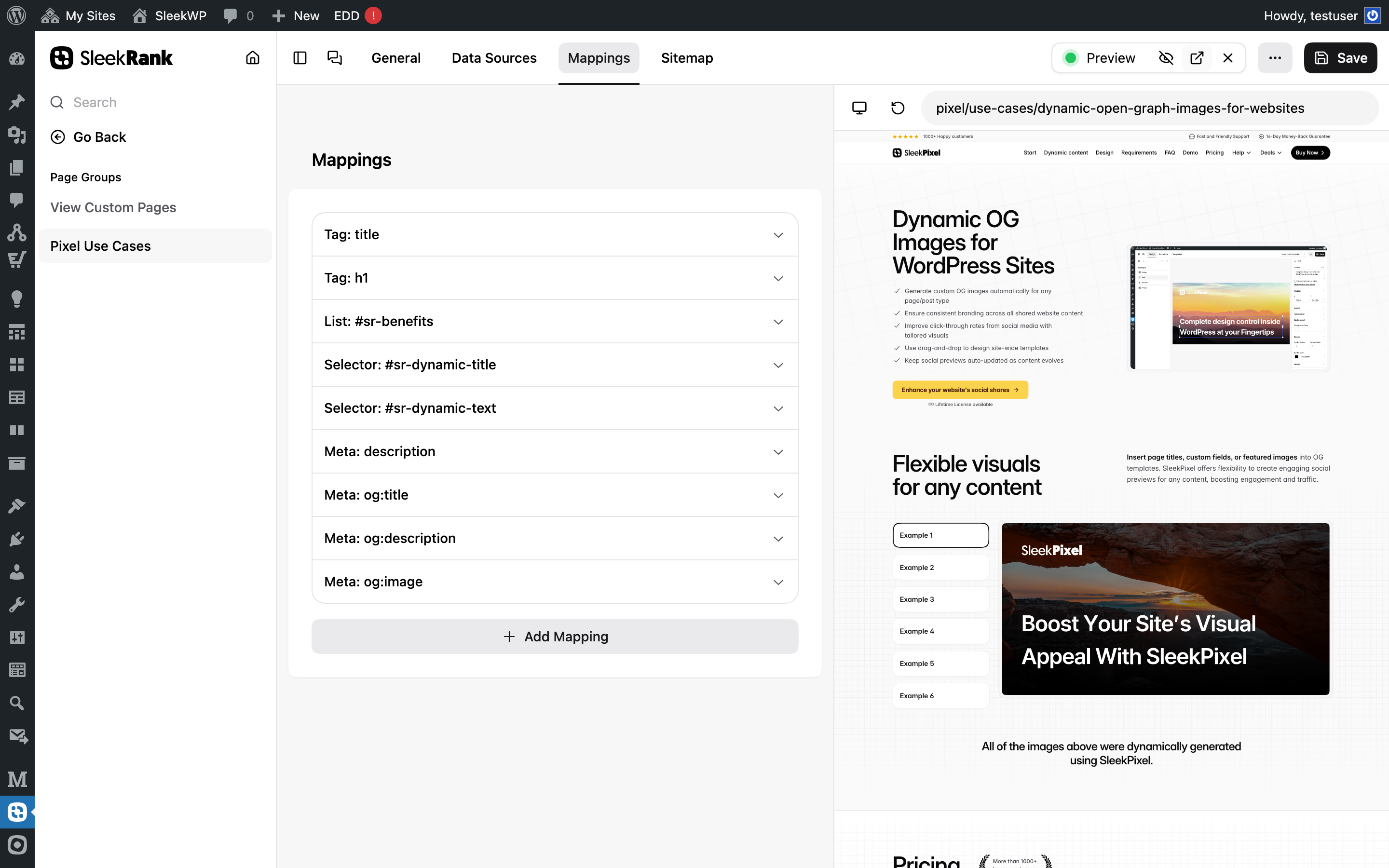

Yes. Map an image URL column to og:image with the meta type, so each per-model page renders its own social card. For per-pair pages, you can render both vendor logos side by side. Pairing with SleekPixel lets the OG image render on the fly from the row data, overlaying model name, input price, and context window on a styled background.

Store each benchmark as its own column (MMLU, HumanEval, GPQA, etc.) and keep a separate JSON file keyed by model slug for historical scores. The template renders current numbers from the main row and an optional history block from the side dataset. One sheet edit updates every page referencing the benchmark, with the historical context preserved for readers tracking model evolution.
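One possible shape for that history file, with a lookup that feeds the optional history block, is sketched below in Python. The JSON layout, the `history_block` helper, the `example-model` slug, and all scores are placeholders, not real benchmark results.

```python
import json

# Placeholder history file contents; slug and scores are illustrative only.
HISTORY_JSON = """
{
  "example-model": [
    {"date": "2024-06-01", "benchmark": "MMLU", "score": 75.0},
    {"date": "2024-01-01", "benchmark": "MMLU", "score": 70.0}
  ]
}
"""


def history_block(slug: str, benchmark: str, history: dict) -> list:
    """Past entries for one model and benchmark, oldest first.

    Returns an empty list when there is no history, so the template
    can omit the block.
    """
    entries = [e for e in history.get(slug, []) if e["benchmark"] == benchmark]
    return sorted(entries, key=lambda e: e["date"])  # ISO dates sort as text


history = json.loads(HISTORY_JSON)
row = {"slug": "example-model", "MMLU": 75.0}  # current score lives on the main row

print("current MMLU:", row["MMLU"])
for entry in history_block(row["slug"], "MMLU", history):
    print(entry["date"], entry["score"])
```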

Pricing

More than 1,000 happy customers

Explore our flexible licensing options tailored to your needs. Upgrade your license anytime to access more features, or opt for a lifetime license for ongoing value, including lifetime updates and lifetime support. Our hassle-free upgrade process ensures that our platform can grow with you, starting from whichever plan you choose.

Starter

EUR

per year

A further 30% launch discount is applied at checkout for existing customers.

- 3 websites

- 1 year of updates

- 1 year of support

Pro

EUR

per year

A further 30% launch discount is applied at checkout for existing customers.

- Unlimited websites

- 1 year of updates

- 1 year of support

Lifetime ♾️

Launch Offer

€299

EUR

once

A further 30% launch discount is applied at checkout for existing customers.

- Unlimited websites

- Lifetime updates

- Lifetime support

...or get the Bundle Deal

and save €250 🎁

The Bundle (unlimited sites)

Pay once, own it forever

Elevate your WordPress site with our exclusive plugin bundle that includes all of our premium plugins in one package. Enjoy lifetime updates and lifetime support. Save significantly compared to buying plugins individually.

What’s included

- SleekAI

- SleekByte

- SleekMotion

- SleekPixel

- SleekRank

- SleekView

€749

Continue to checkout