SleekRank for ML experiment tracker comparisons

Keep experiment trackers and pairs as rows, and SleekRank generates /experiment-trackers/{tracker}/ and /experiment-trackers/{a}-vs-{b}/ pages from your existing WordPress template, with framework support, artifact storage, self-hosting, and pricing pulled from one source.

€50 off for the first 100 lifetime licenses!

Experiment trackers reshape their feature lists every release

ML experiment trackers ship rapidly. Weights and Biases adds new integrations, MLflow promotes plugins to core, Neptune revises its team plan, and Comet adjusts its free-tier artifact quota in the same quarter. A review written six months ago is likely wrong on framework coverage, storage caps, or self-hosting terms. A site running per-tracker reviews plus head-to-heads accumulates dozens of pages whose spec tables fall behind the vendor's docs.

SleekRank reads one source, a sheet of trackers with name, supported_frameworks, artifact_storage_gb_free, self_hosting, team_seats_free, sso_support, sdk_languages, dataset_versioning, model_registry, monthly_price, and a verdict column. It drives per-tracker pages at /experiment-trackers/{tracker}/ and head-to-heads at /experiment-trackers/{a}-vs-{b}/ from the same row data. The base page is a normal WordPress page, and row values fill the framework chips, storage blocks, and verdict slot.
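As a rough illustration of how one row can fill the slots of a base page, the sketch below models a tracker row as a plain dictionary and substitutes its values into placeholder text. The `page_path` and `render_block` helpers and the `{column}` placeholder syntax are assumptions made for this example; they are not SleekRank's actual mapping configuration.

```python
# Hypothetical sketch: one sheet row fills the slots of a base page.
# Column names follow the sheet described above; the helpers and the
# placeholder syntax are illustrative only, not SleekRank's API.

row = {
    "slug": "neptune",
    "name": "Neptune",
    "self_hosting": "Yes",
    "artifact_storage_gb_free": "10",
    "monthly_price": "$49",
}

TEMPLATE = (
    "{name}: {artifact_storage_gb_free} GB free artifact storage, "
    "self-hosting: {self_hosting}, from {monthly_price}/month."
)


def page_path(row: dict) -> str:
    """Build the per-tracker URL path from the row slug."""
    return f"/experiment-trackers/{row['slug']}/"


def render_block(template: str, row: dict) -> str:
    """Fill every {column} placeholder with the matching row value."""
    return template.format_map(row)


print(page_path(row))
print(render_block(TEMPLATE, row))
```

Editing a single cell in the row changes the rendered text wherever that placeholder appears, which is the property the page groups rely on.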

Framework coverage is the most volatile field. A new PyTorch Lightning callback or a JAX integration lands in a minor release, and every page listing supported frameworks needs a patch. When supported frameworks are stored as one JSON column of framework slugs, list mapping renders the live framework grid on every page that references the tracker, with deprecated integrations flagged from a second column.
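To make the two-column idea concrete, here is a minimal sketch that turns a JSON list of framework slugs plus a second JSON list of deprecated slugs into chip data. The column name `deprecated_frameworks`, the helper `framework_chips`, and the sample values are assumptions for illustration, not real vendor data or SleekRank's list mapping itself.

```python
import json

# Sample cell values (made up): one JSON column of framework slugs and
# a second JSON column naming the integrations marked as deprecated.
supported_frameworks = '["pytorch", "lightning", "jax", "tensorflow"]'
deprecated_frameworks = '["tensorflow"]'


def framework_chips(supported_json: str, deprecated_json: str) -> list[dict]:
    """Return one chip per framework slug, flagging deprecated ones."""
    deprecated = set(json.loads(deprecated_json))
    return [
        {"slug": slug, "deprecated": slug in deprecated}
        for slug in json.loads(supported_json)
    ]


for chip in framework_chips(supported_frameworks, deprecated_frameworks):
    label = chip["slug"] + (" (deprecated)" if chip["deprecated"] else "")
    print(label)
```

Adding a new integration is then one extra slug in the first column, and retiring one is one extra slug in the second.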

Workflow

From tracker sheet to per-tracker and pair pages

Build the tracker sheet

Wire the tracker template

Add a pairs page group

Refresh on release news

Data in, pages out

Tracker matrix in, ML tooling pages out

| slug | tracker | self_hosting | artifact_storage_gb_free | monthly_price |
|---|---|---|---|---|
| weights-and-biases | Weights and Biases | Enterprise only | 100 | $50 |
| mlflow | MLflow | Yes (OSS) | Self-managed | $0 |
| neptune | Neptune | Yes | 10 | $49 |
| comet | Comet | Yes | 100 | $39 |
| clearml | ClearML | Yes (OSS) | Self-managed | $0 |

/experiment-trackers/{slug}/

- /experiment-trackers/weights-and-biases/

- /experiment-trackers/mlflow/

- /experiment-trackers/neptune/

- /experiment-trackers/comet/

- /experiment-trackers/weights-and-biases-vs-mlflow/
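The page count grows quickly once pairs are involved. The sketch below counts the pages the five sample rows could produce, assuming every unordered pair gets one page and that pair URLs are built directly from the two row slugs. Both are assumptions: a pairs sheet may list only selected matchups or define its own shorter pair slugs.

```python
from itertools import combinations

# Slugs from the sample matrix above.
slugs = ["weights-and-biases", "mlflow", "neptune", "comet", "clearml"]

# One per-tracker page per row.
solo_paths = [f"/experiment-trackers/{slug}/" for slug in slugs]

# Assumption: one page per unordered pair, named in sheet order.
pair_paths = [
    f"/experiment-trackers/{a}-vs-{b}/" for a, b in combinations(slugs, 2)
]

print(len(solo_paths), len(pair_paths))  # 5 10
```

Five rows already imply up to fifteen pages, which is why hand-maintained spec tables fall behind.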

Comparison

Hand-edited tracker reviews versus one synced matrix

Manual tracker reviews

- Framework integrations change faster than editors can patch pages

- Storage quotas disagree across pages on the same site

- Self-hosting options fall behind after licensing tweaks

- Adding a new tracker means writing a stack of pages

- SDK language support claims go stale between releases

- Pricing tier changes rarely propagate everywhere

SleekRank

- One row drives the per-tracker page and every pair

- Framework support and SDK languages flow through to all pages

- Storage and self-hosting columns stay aligned everywhere

- Pricing and seat columns sync across the catalog

- Cache flush updates every page after a sheet edit

- Sitemap reflects current trackers automatically

Features

What SleekRank gives you for ML experiment tracker comparisons

Framework matrix in one place

Supported frameworks as a JSON column render as a chip grid on every page that references the tracker, so a new Lightning or JAX integration is one row edit instead of a sitewide sweep of framework lists across solo and pair pages.

Head-to-head support

A pairs page group joins two tracker rows into a /a-vs-b/ template so head-to-heads stay in step with per-tracker pages, with side-by-side specs, framework overlap, and a matchup-specific verdict from the pairs sheet.

Self-hosting transparency

A self_hosting column with values like Yes, Yes (OSS), Enterprise only, and No renders consistently across pages, so teams evaluating air-gapped or on-prem deployments see the same disclosure shape everywhere in the catalog.

Use cases

Who builds ML experiment tracker comparisons with SleekRank

ML tooling publications

Editors maintain a master tracker matrix, and per-tracker plus head-to-head pages follow without separate edits, so a feature release propagates across the entire review set in one cache cycle.

MLOps education sites

Course publishers and bootcamp blogs keep a structured comparison of trackers used in lessons, with the same matrix powering buyer guides and module-by-module tool recommendations.

Developer affiliate sites

Affiliates earning on tracker referrals cover the long tail of per-tool and pair queries from one sheet, with spec columns kept aligned with each vendor's live docs.

The bigger picture

Why experiment tracker reviews rot without a data layer

ML engineers picking a tracker are deciding what their training runs, datasets, and model artifacts will lean on for the next year. Framework coverage, artifact storage, self-hosting, and the model registry shape are not marginal details; they are the entire reason an engineer reads a comparison instead of clicking install on the first option. Manual reviews drift on exactly these axes because vendors tune integrations and quotas on their own schedule, not the editor's.

A page claiming a tracker has no Lightning callback when it shipped one last week is wrong by the time it ranks, and the writer has no systematic way to find every related page that copied that gap. SleekRank pins the facts to a single row, so a release note is one column edit that propagates to every per-tracker page, every pair, and any framework roll-up after the cache cycle. For a tooling publication or developer affiliate site, the result is a comparison catalog that stays current long enough to convert ML engineers who care about the details, instead of one that loses trust with each release.

Questions

Common questions about SleekRank for ML experiment tracker comparisons

Not directly. SleekRank reads whatever is in the source sheet on the cache cycle, so the detection step is upstream. A small scraper or your editorial team monitors release notes and updates the matching columns. Once the row is updated, propagation across per-tracker and pair pages is automatic on the next cache flush.

Both page groups read from the same trackers sheet. The pairs group joins two tracker rows by slug at render time using a pairs sheet that holds the pair-specific verdict and head-to-head copy. A change to a tracker row updates every page that references it, including per-tracker, every pair containing that tracker, and any framework roll-ups.
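A minimal sketch of that join, assuming the pairs sheet stores the two tracker slugs in columns named `a` and `b` alongside a `verdict` column. Those column names, the `build_pair_page` helper, and the verdict text are hypothetical and only illustrate the lookup.

```python
# Tracker rows keyed by slug, with values taken from the sample matrix.
trackers = {
    "mlflow": {"name": "MLflow", "self_hosting": "Yes (OSS)", "monthly_price": "$0"},
    "comet": {"name": "Comet", "self_hosting": "Yes", "monthly_price": "$39"},
}

# Hypothetical pairs sheet: two slugs plus pair-specific copy.
pairs = [
    {"a": "comet", "b": "mlflow", "verdict": "Sample verdict text for this matchup."},
]


def build_pair_page(pair: dict, trackers: dict) -> dict:
    """Join both tracker rows onto the pair row by slug."""
    left, right = trackers[pair["a"]], trackers[pair["b"]]
    return {
        "path": f"/experiment-trackers/{pair['a']}-vs-{pair['b']}/",
        "title": f"{left['name']} vs {right['name']}",
        # Side-by-side specs: one (left, right) tuple per column.
        "specs": {
            column: (left[column], right[column])
            for column in ("self_hosting", "monthly_price")
        },
        "verdict": pair["verdict"],
    }


page = build_pair_page(pairs[0], trackers)
print(page["title"], page["specs"])
```

Because the pair page holds only slugs and pair-specific copy, a price change in a tracker row shows up in every matchup that includes it.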

Define another page group with a different URL pattern, source from the same sheet, and filter on the supported_frameworks JSON column. A /experiment-trackers/pytorch/ page becomes its own SEO target, with intro copy on the base page and the matching subset rendered from the source. The same pattern works for cuts on JAX, TensorFlow, scikit-learn, and Lightning.
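The filter itself can be sketched in a few lines. The rows below use placeholder tracker slugs and made-up framework lists, and `trackers_for` is a hypothetical helper rather than a SleekRank function.

```python
import json

# Placeholder rows; the framework lists are sample data only.
rows = [
    {"slug": "tracker-a", "supported_frameworks": '["pytorch", "jax"]'},
    {"slug": "tracker-b", "supported_frameworks": '["tensorflow"]'},
    {"slug": "tracker-c", "supported_frameworks": '["pytorch", "scikit-learn"]'},
]


def trackers_for(framework: str, rows: list[dict]) -> list[str]:
    """Return slugs of rows whose JSON framework list contains the slug."""
    return [
        row["slug"]
        for row in rows
        if framework in json.loads(row["supported_frameworks"])
    ]


print(trackers_for("pytorch", rows))
```

Matching against the parsed list rather than the raw string avoids false hits on substrings of other framework slugs.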

Yes. Filter on self_hosting equal to Yes or Yes (OSS) and render a /experiment-trackers/self-hosted/ subset page. Per-tracker pages still cover the long tail, and the self-hosted page concentrates on the buyer who needs an air-gapped or on-prem deployment, with the same row data driving both views.

Yes. The pairs sheet has its own verdict and recommendation columns. The per-tracker verdicts handle solo pages, and the pair verdict drives head-to-heads. If a pair row's verdict is empty, the template can fall back to a templated summary built from the two trackers' solo verdicts, so every pair page still shows a verdict.
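The fallback logic might look like the sketch below. The `pair_verdict` helper, the summary wording, and the sample verdict strings are assumptions for illustration.

```python
def pair_verdict(pair: dict, left: dict, right: dict) -> str:
    """Prefer the pair-specific verdict; otherwise combine solo verdicts."""
    verdict = (pair.get("verdict") or "").strip()
    if verdict:
        return verdict
    # Fallback: templated summary built from the two solo verdicts.
    return f"{left['name']}: {left['verdict']} {right['name']}: {right['verdict']}"


# Sample rows with placeholder verdict text.
left = {"name": "Tracker A", "verdict": "Sample solo verdict A."}
right = {"name": "Tracker B", "verdict": "Sample solo verdict B."}

print(pair_verdict({"verdict": ""}, left, right))
print(pair_verdict({"verdict": "Sample pair verdict."}, left, right))
```

Treating an empty or whitespace-only cell as missing keeps a half-filled pairs sheet from producing blank verdict slots.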

Add a status column with values like active, deprecated, and sunset. The template renders a banner via selector mapping when status is not active, and the page can either stay live with a historical note or 301 to the closest active alternative based on a recommended_replacement column. Either way, every page that referenced the tracker behaves consistently.
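One possible decision rule is sketched below. It assumes a redirect is chosen whenever `recommended_replacement` names a tracker that is still active, and a banner otherwise. That policy, the `resolve_page` helper, and the banner wording are assumptions, not documented SleekRank behavior.

```python
def resolve_page(row: dict, active_slugs: set[str]) -> dict:
    """Decide whether a tracker page renders normally, with a banner, or redirects."""
    status = row.get("status", "active")
    if status == "active":
        return {"action": "render", "banner": None}

    replacement = row.get("recommended_replacement")
    if replacement in active_slugs:
        # Assumed policy: send readers to the closest active alternative.
        return {
            "action": "redirect",
            "code": 301,
            "location": f"/experiment-trackers/{replacement}/",
        }

    # No usable replacement: keep the page live with a historical note.
    return {"action": "render", "banner": f"This tracker is marked as {status}."}


active = {"mlflow", "comet"}
print(resolve_page({"slug": "old-tool", "status": "sunset", "recommended_replacement": "mlflow"}, active))
print(resolve_page({"slug": "old-tool", "status": "deprecated"}, active))
```

Keeping the rule in one place is what lets every page that references the tracker behave the same way.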

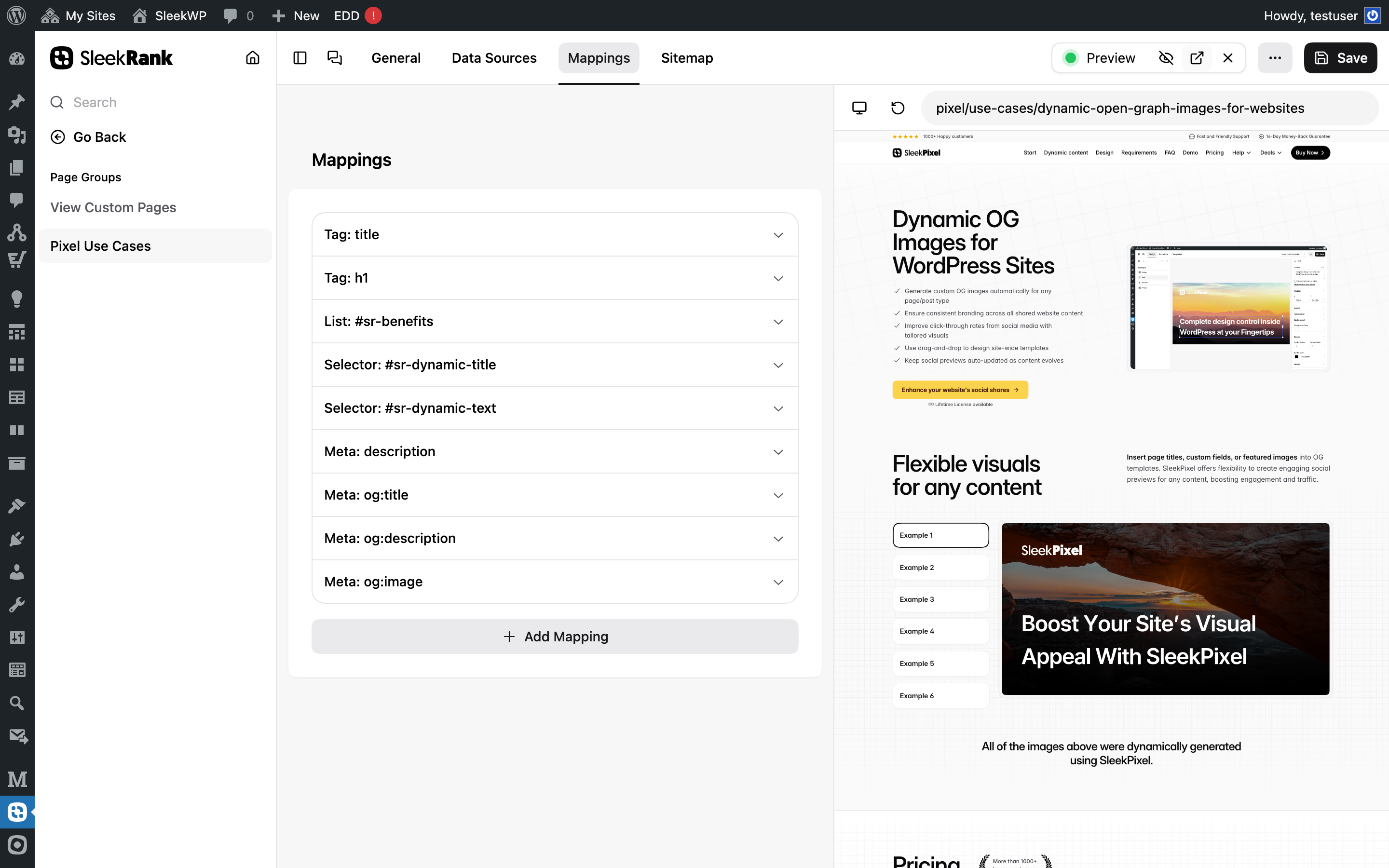

Yes. Map an image URL column to og:image with the meta type, so each per-tracker page renders its own social card. For pair pages, the template can compose a side-by-side OG. Pairing with SleekPixel lets the OG image render on the fly from the row data, overlaying tracker logos, top framework chips, and pricing on a styled background.

Add a code_examples JSON column keyed by language, with snippets per tracker. Per-tracker pages render the example matching the reader's selected language tab, and a small editorial check before each release ensures the snippets compile against the current SDK version. Once a snippet is updated in the row, every page using it inherits the fix.
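A sketch of what such a column and a pre-release check could look like. The snippets are generic placeholders rather than real tracker SDK calls, and `snippet_for` and `python_snippet_parses` are hypothetical helpers. The check only confirms that a Python snippet parses; it cannot detect an SDK call that was renamed or removed.

```python
import ast
import json

# Hypothetical code_examples cell: JSON keyed by language.
code_examples = json.dumps({
    "python": "import math\nprint(math.sqrt(16))",
    "r": "print(sqrt(16))",
})


def snippet_for(cell: str, language: str, default: str = "python") -> str:
    """Return the snippet for the selected tab, or the default language."""
    examples = json.loads(cell)
    return examples.get(language, examples[default])


def python_snippet_parses(cell: str) -> bool:
    """Syntax-only check for the Python snippet, if one exists."""
    source = json.loads(cell).get("python")
    if source is None:
        return True
    try:
        ast.parse(source)
    except SyntaxError:
        return False
    return True


print(snippet_for(code_examples, "r"))
print(python_snippet_parses(code_examples))
```

A fuller editorial check would run each snippet against the current SDK version in an isolated environment, which is outside the scope of this sketch.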

Pricing

More than 1,000 happy customers

Explore our flexible licensing options tailored to your needs. Upgrade your license anytime to access more features, or opt for a lifetime license for ongoing value, including lifetime updates and lifetime support. Our hassle-free upgrade process ensures that our platform can grow with you, starting from whichever plan you choose.

Starter

EUR

per year

A further 30% launch discount is applied at checkout for existing customers.

- 3 websites

- 1 year of updates

- 1 year of support

Pro

EUR

per year

A further 30% launch discount is applied at checkout for existing customers.

- Unlimited websites

- 1 year of updates

- 1 year of support

Lifetime ♾️

Launch Offer

€299

EUR

once

A further 30% launch discount is applied at checkout for existing customers.

- Unlimited websites

- Lifetime updates

- Lifetime support

...or get the Bundle Deal

and save €250 🎁

The Bundle (unlimited sites)

Pay once, own it forever

Elevate your WordPress site with our exclusive plugin bundle that includes all of our premium plugins in one package. Enjoy lifetime updates and lifetime support. Save significantly compared to buying plugins individually.

What’s included

- SleekAI

- SleekByte

- SleekMotion

- SleekPixel

- SleekRank

- SleekView

€749
