SleekRank for MLOps platform comparisons

Keep MLOps platforms and stacks as rows, and SleekRank generates /mlops/{platform}/ and /mlops/{stack}/ pages from your existing WordPress template, with deployment targets, monitoring, model registry, and pricing pulled from one source.

€50 off for the first 100 lifetime licenses!

MLOps platforms reshape their pipelines every quarter

MLOps platforms grow horizontally. SageMaker adds new inference flavors, Vertex AI promotes preview features to GA, Databricks ML extends model serving, and Kubeflow component versions tick forward. A roundup written last quarter is likely wrong on inference type, monitoring detector coverage, or which orchestrators a platform ships against. Sites running per-platform reviews and stack-based comparisons accumulate dozens of pages whose capability tables fall behind the vendor's release notes.

SleekRank reads one source, a sheet of platforms with name, deployment_targets, inference_modes, model_registry, monitoring_features, supported_orchestrators, fine_tuning_support, cloud_providers, byo_compute, pricing_model, and a verdict column. It drives per-platform pages at /mlops/{platform}/ and per-stack pages at /mlops/{stack}/ from the same row data. The base page is a normal WordPress page, and row values fill the capability tables, deployment chips, and verdict slot.

Monitoring coverage is the field that moves fastest. When a platform ships drift detection or extends data quality checks, every page that listed monitoring gaps is wrong. With monitoring coverage stored as a JSON column of slugs like drift, skew, data_quality, and explainability, list mapping renders the live monitoring matrix on every page that references the platform, and new detectors are flagged from a recently_added column.
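As a rough illustration of the idea, not SleekRank's actual internals, the Python sketch below turns a monitoring_features JSON column and a recently_added JSON column into a fixed-order matrix. The function name, the output shape, and the assumption that recently_added holds a JSON list of slugs are all hypothetical.

```python
import json

# Fixed slug order so every page shows the same matrix layout.
MONITORING_SLUGS = ["drift", "skew", "data_quality", "explainability"]

def monitoring_matrix(monitoring_features: str, recently_added: str = "[]") -> list:
    """Build one matrix cell per known slug from two JSON list columns."""
    supported = set(json.loads(monitoring_features))
    recent = set(json.loads(recently_added))
    return [
        {
            "slug": slug,
            "supported": slug in supported,
            # Only flag a detector as new if the platform actually supports it.
            "new": slug in supported and slug in recent,
        }
        for slug in MONITORING_SLUGS
    ]

for cell in monitoring_matrix('["drift", "data_quality"]', '["data_quality"]'):
    print(cell)
```

Because the slug order lives in one place, a missing detector shows up as an explicit unsupported cell instead of silently dropping out of the table.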

Workflow

From platform sheet to per-platform and stack pages

Build the platform sheet

Wire the platform template

Add a stack page group

Refresh on platform releases

Data in, pages out

Platform matrix in, MLOps pages out

| slug | platform | primary_cloud | model_registry | byo_compute |
|---|---|---|---|---|
| sagemaker | Amazon SageMaker | AWS | Yes (native) | Limited |
| vertex-ai | Google Vertex AI | GCP | Yes (native) | Limited |
| databricks-ml | Databricks ML | Multi-cloud | Unity Catalog | Yes |
| azure-ml | Azure ML | Azure | Yes (native) | Yes |
| kubeflow | Kubeflow | Any (OSS) | Yes (OSS) | Yes |

/mlops/{slug}/

- /mlops/sagemaker/

- /mlops/vertex-ai/

- /mlops/databricks-ml/

- /mlops/azure-ml/

- /mlops/kubeflow/
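The mapping from rows to URLs can be pictured with a few lines of Python. This is only a sketch of the pattern, assuming the sheet is exported as CSV with a slug column; the function name and the CSV export are assumptions, not a description of how the plugin reads its source.

```python
import csv
import io

# A few rows copied from the table above, as a CSV export might look.
SHEET_CSV = """slug,platform,primary_cloud,model_registry,byo_compute
sagemaker,Amazon SageMaker,AWS,Yes (native),Limited
vertex-ai,Google Vertex AI,GCP,Yes (native),Limited
kubeflow,Kubeflow,Any (OSS),Yes (OSS),Yes
"""

def page_paths(sheet_csv: str, pattern: str = "/mlops/{slug}/") -> list:
    """Return one URL path per sheet row by filling the slug into the pattern."""
    rows = csv.DictReader(io.StringIO(sheet_csv))
    return [pattern.format(slug=row["slug"].strip()) for row in rows]

print(page_paths(SHEET_CSV))
```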

Comparison

Hand-edited platform reviews versus one synced matrix

Manual platform reviews

- Inference modes change faster than editors can patch pages

- Monitoring features disagree across pages on the same site

- Orchestrator support claims fall behind product updates

- Adding a new platform means writing a stack of pages

- Cloud provider coverage shifts each fiscal year

- Pricing models rarely propagate everywhere

SleekRank

- One row drives the per-platform page and every stack roundup

- Deployment targets and inference modes flow through to all pages

- Monitoring and registry columns stay aligned everywhere

- Pricing and BYO compute columns sync across the catalog

- Cache flush updates every page after a sheet edit

- Sitemap reflects current platforms automatically

Features

What SleekRank gives you for MLOps platform comparisons

Cloud and deployment matrix

Cloud providers and deployment targets render from JSON columns as chip grids on every page that references the platform, so a new region or inference flavor is one row edit instead of a sitewide sweep across solo and stack pages.

Monitoring transparency

Drift, skew, data quality, and explainability detectors render from a monitoring_features column, keeping observability claims honest across per-platform and per-stack pages when a vendor ships or sunsets a detector.

Stack-based page groups

A second page group from a stacks sheet generates /mlops/{stack}/ pages, joining every platform that supports a given orchestrator, registry, or feature store, with a stack-specific verdict per page.
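The join behind a stack page is conceptually a filter over the platform rows. The sketch below assumes supported_orchestrators is stored as a JSON list of slugs; the platform names and orchestrator values are placeholders invented for the example, not claims about real vendors, and the function is hypothetical.

```python
import json

# Hypothetical rows: placeholder platforms, not statements about real products.
PLATFORMS = [
    {"slug": "platform-a", "supported_orchestrators": '["airflow", "kubeflow_pipelines"]'},
    {"slug": "platform-b", "supported_orchestrators": '["airflow"]'},
    {"slug": "platform-c", "supported_orchestrators": "[]"},
]

def platforms_for_stack(platforms, orchestrator):
    """Return slugs of platforms whose JSON column lists the orchestrator."""
    return [
        p["slug"]
        for p in platforms
        if orchestrator in json.loads(p["supported_orchestrators"])
    ]

print(platforms_for_stack(PLATFORMS, "airflow"))
```

The same filter would work for a registry or feature store column, which is why one platform row can feed several stack pages.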

Use cases

Who builds MLOps platform comparisons with SleekRank

MLOps consultancies

Consultancies publishing capability matrices for client buying processes keep one master sheet and serve per-platform plus per-stack pages from the same source, with capability columns aligned to vendor docs.

Cloud and ML publications

Editors maintain the master MLOps matrix, and per-platform plus stack pages follow without separate edits, so a release note propagates across the entire review set in one cache cycle.

ML training providers

Course publishers tracking which platforms align with which curriculum modules keep a structured comparison, with one sheet driving public buyer guides and internal lesson references.

The bigger picture

Why MLOps comparisons rot without a data layer

ML platform decisions are committed for years. Migrating off SageMaker or Vertex AI is not a weekend project, so buyers read comparisons closely and weigh deployment surface, monitoring depth, and registry shape against their existing cloud commitment. Manual review pages drift on these exact axes because each platform ships features on its own quarterly rhythm, not the editor's.

A page claiming Vertex AI has no online prediction autoscaling option, when it has shipped twice since the article ran, is wrong by the time a buyer finds it through search. SleekRank pins the facts to one row, so a release note is one column edit that propagates to every per-platform page, every stack roundup, and any cloud-specific cut after the cache cycle. For an MLOps consultancy or cloud publication, the result is a comparison catalog that stays accurate long enough for ML leaders to use it in a real buying process, instead of one that decays each release and silently leaks credibility.

Questions

Common questions about SleekRank for MLOps platform comparisons

Define a strict schema for what each column means. Inference modes might be limited to batch, real_time, streaming, async, and serverless, with each platform either supporting or not supporting each value. The template renders the same chip set in the same order on every page, so partial coverage is visible instead of hidden behind editorial wording. Vendor disagreements about terminology become data, not prose.
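One way to picture a strict schema is a fixed tuple of allowed values plus a check that rejects anything else. The following Python sketch is illustrative only; the function name and the decision to raise on unknown values are assumptions.

```python
# Allowed values and their display order, taken from the answer above.
INFERENCE_MODES = ("batch", "real_time", "streaming", "async", "serverless")

def inference_chips(supported):
    """Return (mode, is_supported) pairs in schema order, rejecting unknown values."""
    unknown = set(supported) - set(INFERENCE_MODES)
    if unknown:
        raise ValueError(f"unknown inference modes: {sorted(unknown)}")
    return [(mode, mode in supported) for mode in INFERENCE_MODES]

print(inference_chips(["serverless", "batch"]))
```

Rejecting unknown values at load time keeps a vendor's marketing term from slipping into the sheet as a sixth, undefined mode.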

Yes. The stacks sheet has its own ranking column per stack. Per-platform pages handle solo views, and the stack ranking drives the ordered list on each /mlops/{stack}/ page. If a stack row's ranking is empty, the template can fall back to alphabetical order or to a templated rank derived from a few columns, so every stack page still renders an ordered list.
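The fallback logic can be sketched in Python as follows. It assumes the ranking is available as a list of slugs and covers only the alphabetical fallback; the function name and data shapes are hypothetical.

```python
PLATFORMS = [
    {"slug": "kubeflow", "platform": "Kubeflow"},
    {"slug": "azure-ml", "platform": "Azure ML"},
    {"slug": "sagemaker", "platform": "Amazon SageMaker"},
]

def ordered_platforms(platforms, ranking):
    """Order platform names by the stack's ranking, or alphabetically if it is empty."""
    if ranking:
        by_slug = {p["slug"]: p["platform"] for p in platforms}
        # Skip ranked slugs that no longer exist in the platform sheet.
        return [by_slug[slug] for slug in ranking if slug in by_slug]
    return sorted((p["platform"] for p in platforms), key=str.lower)

print(ordered_platforms(PLATFORMS, ["sagemaker", "kubeflow"]))
print(ordered_platforms(PLATFORMS, []))
```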

Add a deployment_model column with values like managed_saas, managed_paas, hybrid, and self_hosted_oss, then render a /mlops/open-source/ subset page filtered on self_hosted_oss. Per-platform pages cover the long tail, and the OSS page serves teams that specifically want self-hosted options, with the same row data driving both views.

Add columns like fine_tuning_support, hosted_foundation_models, and supported_lora_targets. These render as chips and badges on per-platform pages and feed a /mlops/fine-tuning/ cut page that ranks platforms by fine-tuning surface. The same approach works for vector DB integrations, RAG patterns, and agent runtimes.

Yes. The cloud_providers column can hold values like aws_full, gcp_full, azure_preview, and aws_limited_regions. The template renders these as chips with a tone class derived from the suffix, so a reader sees at a glance which clouds are GA versus preview versus regionally limited.
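Deriving a tone class from the suffix amounts to splitting each value at its first underscore. The sketch below shows that split in Python; the CSS class names and the function are made up for illustration and are not part of SleekRank.

```python
# Hypothetical mapping from status suffix to a CSS tone class.
TONE_BY_STATUS = {
    "full": "chip--ga",
    "preview": "chip--preview",
    "limited_regions": "chip--limited",
}

def cloud_chip(value):
    """Split 'aws_limited_regions' into cloud and status, then pick a tone class."""
    cloud, _, status = value.partition("_")
    return {
        "cloud": cloud,
        "status": status,
        "css_class": TONE_BY_STATUS.get(status, "chip--unknown"),
    }

for value in ["aws_full", "azure_preview", "aws_limited_regions"]:
    print(cloud_chip(value))
```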

Add a pricing_model column with values like usage_based, tiered_subscription, quote_only, and free_oss, plus a starting_price_usd column. Pages render the pricing pattern honestly, and quote-only platforms show a clear callout instead of an estimated number. This sets reader expectations and avoids fictional dollar figures that mislead buyers.
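A small Python sketch of that rendering rule, under the assumption that the two columns arrive as a string and an optional number. The label wording and function name are placeholders, not the plugin's output.

```python
# Display labels for the four pricing_model values named above (wording is illustrative).
LABELS = {
    "usage_based": "Usage-based pricing",
    "tiered_subscription": "Tiered subscription",
    "quote_only": "Quote only",
    "free_oss": "Free and open source",
}

def pricing_line(pricing_model, starting_price_usd=None):
    """Render a pricing summary without inventing a number for quote-only rows."""
    label = LABELS[pricing_model]  # raises KeyError for values outside the schema
    if pricing_model == "quote_only":
        # Ignore any stray price so the page never shows an estimated figure.
        return f"{label}: contact the vendor for pricing"
    if pricing_model == "free_oss" or starting_price_usd is None:
        return label
    return f"{label}, from ${starting_price_usd:,.2f}"

print(pricing_line("usage_based", 1200))
print(pricing_line("quote_only"))
```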

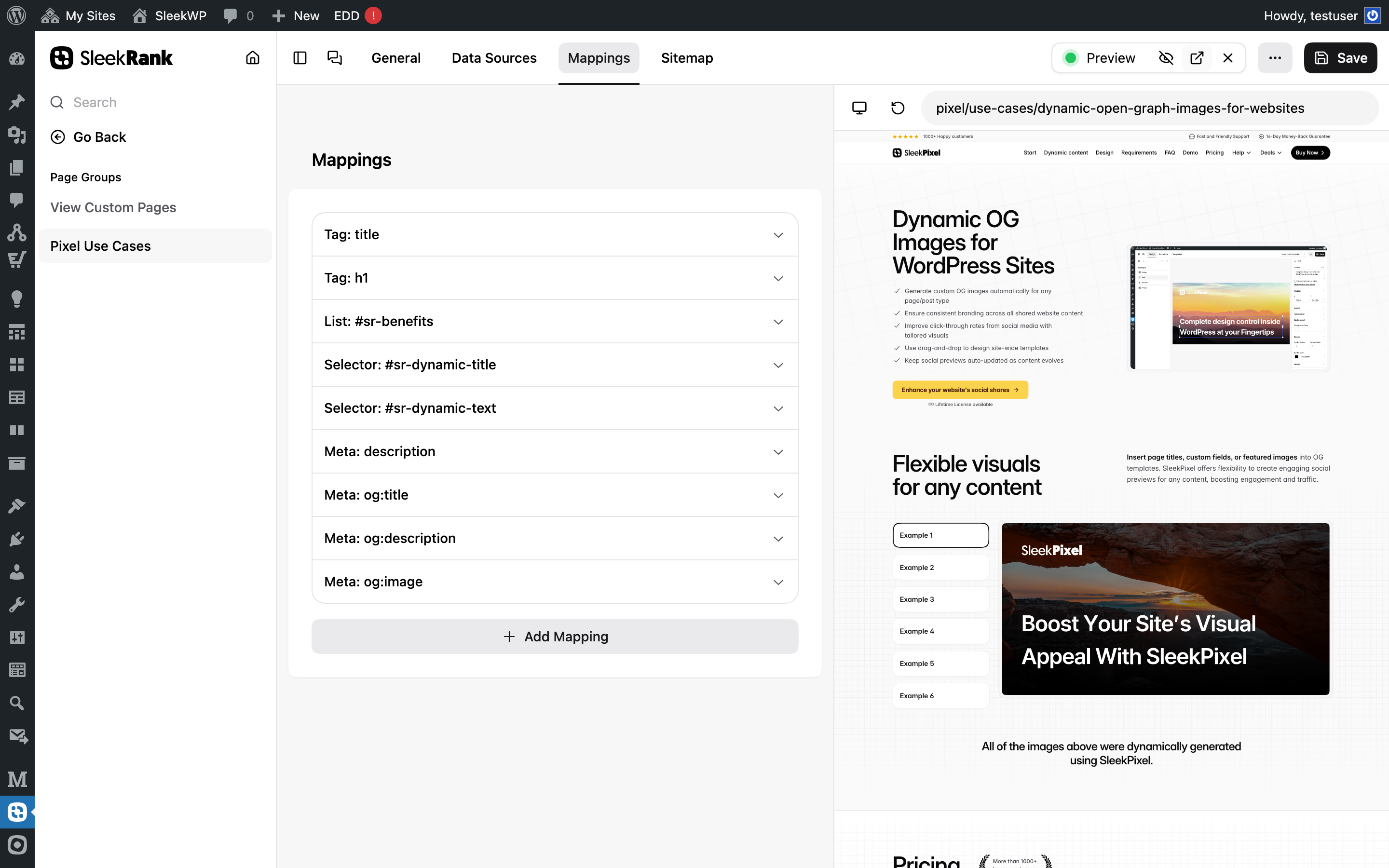

Yes. Map an image URL column to og:image via the meta type, so each per-platform page renders its own social card. For per-stack pages, the template can compose a stack badge OG. Pairing with SleekPixel lets the OG render on the fly from row data, overlaying platform name, primary cloud, and a deployment chip on a styled background.

Add a deprecated_features JSON column per platform with feature slugs and sunset dates. The template renders a small notice via selector mapping when the column is non-empty, so readers see live and deprecated coverage on the same page. As platforms sunset components, the disclosure shape stays uniform across the catalog.
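As a sketch of how such a column could be turned into notices, the Python below assumes each entry is an object with a feature slug and an ISO sunset date. That shape, the feature slugs, and the function name are assumptions for the example.

```python
import json
from datetime import date

def deprecation_notices(deprecated_features):
    """Return notice strings sorted by sunset date; empty column means no notice."""
    if not deprecated_features or not deprecated_features.strip():
        return []
    entries = json.loads(deprecated_features)
    # Assumed entry shape: {"feature": "<slug>", "sunset": "YYYY-MM-DD"}.
    entries = sorted(entries, key=lambda e: date.fromisoformat(e["sunset"]))
    return [f'{e["feature"]} (sunset {e["sunset"]})' for e in entries]

# Hypothetical column value with made-up feature slugs.
column = (
    '[{"feature": "legacy_endpoint", "sunset": "2026-03-01"},'
    ' {"feature": "old_sdk", "sunset": "2025-12-31"}]'
)
print(deprecation_notices(column))
```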

Pricing

More than 1,000 happy customers

Explore our flexible licensing options tailored to your needs. Upgrade your license anytime to access more features, or opt for a lifetime license for ongoing value, including lifetime updates and lifetime support. Our hassle-free upgrade process ensures that our platform can grow with you, starting from whichever plan you choose.

Starter

EUR

per year

A further 30% launch discount is applied at checkout for existing customers.

- 3 websites

- 1 year of updates

- 1 year of support

Pro

EUR

per year

A further 30% launch discount is applied at checkout for existing customers.

- Unlimited websites

- 1 year of updates

- 1 year of support

Lifetime ♾️

Launch Offer

€299

EUR

once

A further 30% launch discount is applied at checkout for existing customers.

- Unlimited websites

- Lifetime updates

- Lifetime support

...or get the Bundle Deal

and save €250 🎁

The Bundle (unlimited sites)

Pay once, own it forever

Elevate your WordPress site with our exclusive plugin bundle that includes all of our premium plugins in one package. Enjoy lifetime updates and lifetime support. Save significantly compared to buying plugins individually.

What’s included

- SleekAI

- SleekByte

- SleekMotion

- SleekPixel

- SleekRank

- SleekView

€749
